How Basic Performance Analysis Saved Us Millions

This is the story of how I applied basic performance analysis techniques to find a small change that resulted in a 10x improvement in CPU use for our Postgres cluster and will save Heap millions of dollars over the next year.

Indexing Data for Customer Analytics

Heap is a customer analytics tool that automatically captures every user interaction with your website or app. Once installed on a website, Heap will automatically track every pageview, click, form submission, and more. From there, the owner of the website can use Heap to perform many different kinds of aggregations over different subsets of the raw data.

In order to make it possible to get insights out of this data, Heap lets users define events in terms of the raw data. An example might be a “Login”, which could be defined as a “form submission on the /login page”.

To make analyses fast, we use a very unusual indexing strategy which relies on Postgres’ partial indexing feature. A partial index is like a normal Postgres index, except it only contains rows that satisfy a specified predicate. You can think of it like a regular index with a WHERE clause. For every event definition one of our customers creates, we create a partial index on that customer’s raw event data, restricted to the rows which match the definition. Whenever a new row is inserted into our events table, Postgres will automatically test the event against the predicate of each partial index on the table and add the row to the necessary indexes.

For each event definition, the corresponding partial index makes it very fast to retrieve all matching events because the index contains exactly the events that satisfy the definition.

Problem: Unusually High CPU Usage

When we first rolled out this indexing strategy, our CPU use was significantly higher than it was with our previous indexing strategy. This made sense, we thought: our largest customers have thousands of these indexes and in order to support filters based on CSS selectors, lots of these partial indexes contain a regular expression filter. We thought that since regular expressions are fairly expensive to evaluate, it only made sense that testing a thousand regexes against every event as it was inserted would cause Postgres to use a ton of CPU. There was no real evidence this was the case, but it became the explanation everyone at Heap gave for why Postgres used so much CPU. We assumed it was a fundamental tradeoff of the indexing strategy.

Around October, as our data volume continued to increase, we started having issues ingesting all of the data coming in during peak hours. On some days it would take hours for a new event to show up in the Heap dashboard. This is completely unacceptable for a tool meant for real time analytics. Instead of going the typical route and throwing money at the problem, I thought I would try my hand at optimizing Heap’s ingestion throughput.

Visualizing CPU Use with Flame Graphs

Prior to this I had limited experience debugging performance issues. After googling for a bit, I came across one of Brendan Gregg’s posts on flame graphs. A flame graph is a type of visualization Brendan Gregg invented as a way to quickly identify which parts of your code are taking up CPU. The first step in creating a flame graph is to take samples of the stack of the process using the Linux perf tool:

perf record -p $(pid of process) -F 99 -g -- sleep 60

This will sample the stack of the given process at 99 times a second for 60 seconds and write the data to a file called perf.data. From there, you can run the following commands from Brendan Gregg’s flame graph library to process the file and generate a flame graph:

perf script | ./stackcollapse-perf.pl > out.perf-folded ./flamegraph.pl out.perf-folded > flame-graph.svg

One of the first flame graphs I created was of a Postgres backend process. Due to our use of connection pooling, a single backend process will serve multiple queries. Since the vast majority of queries we run are INSERTs, a flame graph of a Postgres backend process would give us a good idea of where the CPU was spent when inserting events into the database. After running the above commands on a pid for a Postgres process I got from pg_stat_activity, I obtained the following flame graph:

You can click the image to open it in a new tab, then click a rectangle to zoom in. Hovering over a rectangle will pull up some information about the rectangle. For the uninitiated, a flame graph can be pretty difficult to understand. Brendan Gregg gives the following explanation for how to interpret one:

The x-axis shows the stack profile population, sorted alphabetically (it is not the passage of time), and the y-axis shows stack depth. Each rectangle represents a stack frame. The wider a frame is is, the more often it was present in the stacks. The top edge shows what is on-CPU, and beneath it is its ancestry. The colors are usually not significant, picked randomly to differentiate frames.

It’s pretty clear from the flame graph that ~55% of CPU time is spent in ExecOpenIndices (the large yellow bar in the center right of the image). Looking up the flame graph a tiny bit, it appears that most of the time is split between two different functions, BuildIndexInfo and index_open. BuildIndexInfo calls into RelationGetIndexPredicate where ~20% of all CPU time is spent. It looks like the majority of that time is spent in RelationGetIndexPredicate.

Looking into the source code for RelationGetIndexPredicate, it appears its purpose is to parse and optimize a partial index predicate. It makes sense that so much time is spent in RelationGetIndexPredicate since parsing an arbitrary expression is much more difficult than evaluating an already parsed expression.

Now let’s look at the rest of the time spent in ExecOpenIndices. Most of the remaining time is spent in index_open. It looks like index_open calls into relation_open which then calls into RelationIdGetRelation. From the documentation of RelationIdGetRelation in the source code, its purpose is to lookup the metadata for different relations. (In this case it is mainly being used for looking up the partial indexes.) Based on how the time is spent in RelationGetIndexPredicate and RelationIdGetRelation, it appears that Postgres spends a lot more time fetching and parsing the partial index predicates than it does evaluating them.

Implementing a Fix

Looking at the source code for these different functions, there is a significant amount of caching going on. In RelationGetIndexPredicate, Postgres first checks if it has already extracted the predicate and immediately returns it.

RelationIdGetRelation first uses RelationIdCacheLookup to check if the relation metadata has already been calculated and cached. It appears that under normal circumstances, the index metadata would be fetched and parsed once, and then read from cache the rest of the time.

Unfortunately for us, the caching doesn’t work well if you’re writing events one at a time to tens of thousands of different tables. Postgres has a pool of processes that it uses to serve queries, and each of these processes keeps its own cache. Every insert is assigned round-robin amongst these processes. When inserting events one at a time, to a sharded schema with tens of thousands of underlying tables, it is unlikely that two inserts going to the same table will be served by the same process. This means that index metadata is almost never cached in the process that’s executing the insert. So, Postgres needs to fetch and parse the index metadata for the destination table once for almost every event we insert.

This suggests a simple change we could make: instead of inserting all of the events individually, we could batch insert many events going to the same table. By using a single command to insert many events, Postgres would only need to fetch and parse the index metadata once per batch. We had thought of batching our inserts before to reduce transaction counts, but never to save CPU resources, as we assumed all the CPU was going towards evaluating index predicates.

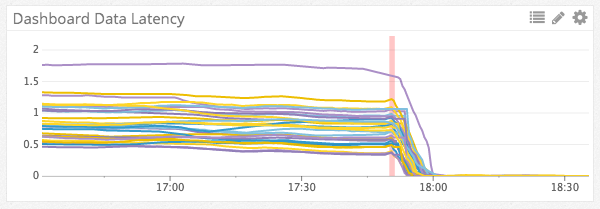

Initial benchmarks of batched inserts showed a 10x reduction in CPU usage. Once we obtained these results, we began testing the batched inserts in production. Ultimately, we did get about a 10x improvement to ingestion throughput when using batches of an average size of ~50 events. Here is what our ingestion latency for different kafka partitions looked like right before and after we deployed batching:

The unit on the left is hours of latency. We were able to clear about an hour of backlog in only minutes.

After deploying batching, I took another flame graph of inserts:

This time, it appears a large portion of the time is now going to ExecQual (red bar in the middle), which based on the source code, is the function used to evaluate partial index predicates. That means Postgres is now spending most of the CPU doing the actual work of evaluating partial index predicates.

I made this discovery six months ago. Since then, we haven’t needed to add any additional CPU to our cluster and it doesn’t look like we will need to in the next few months either! I was able to find this win using only rudimentary performance analysis techniques. It really doesn’t take much to find 10x wins.

By the way, if you are interested in doing this kind of work, we are hiring! Apply here or reach out on twitter.

Additional resources: